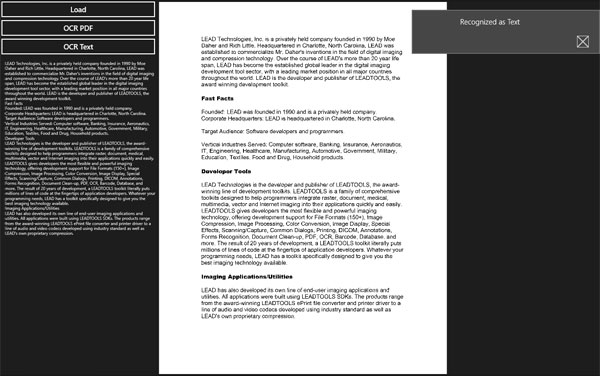

PyOCR is an optical character recognition (OCR) tool wrapper for Python. That is, it helps using various OCR tools from a Python program. It has been tested only on GNU/Linux systems. It should also work on similar systems (BSD, etc.). It may or may not work on Windows, MacOSX, etc.

Argument 'lang' is optional. The default value depends on … Argument 'builder' is optional. If the OCR fails, an exception `pyocr.PyocrException` is raised. An exception MAY be raised if the input image contains no text at all (depends on the OCR tool behavior).

`txt` is a Python string.

`word_boxes` is a list of box objects. For each box object, `box.content` is the word in the box and `box.position` is its position on the page (in pixels). Beware that some OCR tools (Tesseract for instance) may return empty boxes.

`line_and_word_boxes` is a list of line objects. For each line object, `line.word_boxes` is a list of word boxes (the individual words in the line), `line.content` is the whole text of the line, and `line.position` is the position of the whole line on the page (in pixels). Each word box object has an attribute `confidence` giving the confidence score provided by the OCR tool. The confidence score depends entirely on the OCR tool and is only supported with Tesseract and Libtesseract (always 0 with Cuneiform). Beware that some OCR tools (Tesseract for instance) may return boxes with an empty content.

Digits (`DigitBuilder()`): only Tesseract (not 'libtesseract' yet!).

Orientation detection: currently only available with Tesseract or Libtesseract.

The card-reading code looks for the date of birth on lines whose content includes `'DOB'` or `'D.O.B'` and parses it with the format `'%d, %b %Y'`.

    print(box.content, box.position, box.confidence)
    line_and_word_boxes = tool.image_to_string(
    builder=(tesseract_layout=4)
    for i, line in enumerate(line_and_word_boxes):
    # myString is None OR myString is empty or blank
    def extract_info_from_image(image_path: str) -> Person:
    # myString is not None AND myString is not empty or blank
    # ret, bin_image = cv2.threshold(image_gs, 200, 255, cv2.THRESH_BINARY)
    bin_image = cv2.adaptiveThreshold(image_gs, 255, cv2.ADAPTIVE_THRESH_MEAN_C, cv2.THRESH_BINARY, 15, 5)

    return cv2.cvtColor(image, cv2.COLOR_RGB2GRAY)
    return cv2.cvtColor(cv2.imread(path), cv2.COLOR_BGR2RGB)
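The date-of-birth lookup and the "empty or blank" checks that appear in the fragments above could be combined roughly as follows. This is only a sketch: the helper names `is_blank` and `parse_date_of_birth` are hypothetical, the input is assumed to be the text of each OCR line (for example each `line.content`), and the date is assumed to be the last three space-separated tokens of the matching line. Only the `'DOB'` / `'D.O.B'` markers and the `'%d, %b %Y'` format come from the original code.

```python
import datetime
from typing import Iterable, Optional


def is_blank(text: Optional[str]) -> bool:
    """True when text is None, empty, or whitespace only."""
    return text is None or not text.strip()


def parse_date_of_birth(lines: Iterable[Optional[str]]) -> Optional[datetime.date]:
    """Return the first date found on a line mentioning DOB or D.O.B."""
    for content in lines:
        if is_blank(content):
            continue  # some OCR tools return boxes with empty content
        if "DOB" not in content and "D.O.B" not in content:
            continue
        # Assumption: the date is the last three tokens, e.g. "DOB 12, Mar 1985".
        tokens = content.split()
        try:
            parsed = datetime.datetime.strptime(" ".join(tokens[-3:]), "%d, %b %Y")
        except ValueError:
            continue  # OCR noise on this line: try the next matching one
        return parsed.date()
    return None
```

Returning `None` instead of raising keeps one unreadable field from aborting the extraction of the others. Note that `%b` is locale-dependent, so month abbreviations such as "Mar" are matched according to the current locale.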

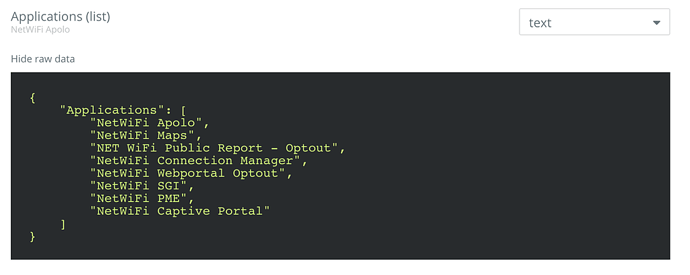

I have three different types of ID cards on different multicoloured backgrounds. For each card I need to recognise the name, company, job, date of birth and social security number of the owner. I'm limited to using OpenCV v3, Tesseract v4, Keras, Scikit-Learn and Python 3.6.

Tesseract 4.x expects dark text on a light background, so I tried working with a gray image, a binary image, even an inverted image, but I can't find a solution that works for all the types of document I have.

I assume it would greatly help if I were able to crop the documents from the images and ignore the backgrounds that way, but I'm not sure how to recognise a document against a background. Could contours help?

My other concern is how to make my code generically handle a green document with white text (Google ID), a white document with dark text (IBM ID) and a light yellow document with gray and yellow text (Apple). I could write three different algorithms, but I would still need to decide which one to use on each image.

The documents are also skewed, and it would help if I were able to deskew them in pre-processing.

Any general pointers or code snippets for specific parts of pre-processing would help.

Here is the code I have working only with IBM cards for now:

    import datetime

    def __init__(self, name: str = None, date_of_birth: datetime.date = None, job: str = None, ssn: str = None,

    from PIL import Image
    import cv2
    import sys
    import pyocr
    import pyocr.builders
    import time

    tools = pyocr.get_available_tools()
    if len(tools) == 0:
        print('No OCR tool found')
        sys.exit(1)
    tool = tools[0]
    langs = tool.get_available_languages()
    lang = langs.
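On the polarity problem raised in the question (Tesseract 4.x expecting dark text on a light background), one possible heuristic is to look at the median intensity of the grayscale card and invert the image when the background appears dark. The sketch below is not from the original post: the function name `ensure_dark_text_on_light` and the threshold of 128 are assumptions, and it presumes the image has already been cropped to the card, so that the multicoloured surroundings do not skew the median.

```python
import numpy as np


def ensure_dark_text_on_light(gray: np.ndarray) -> np.ndarray:
    """Invert an 8-bit grayscale image when its background looks dark."""
    if gray.dtype != np.uint8 or gray.ndim != 2:
        raise ValueError("expected a 2-D uint8 grayscale image")
    # Assumption: text covers well under half of the pixels, so the median
    # intensity reflects the background rather than the glyphs.
    if np.median(gray) < 128:
        return 255 - gray  # white-on-dark card: flip to dark-on-light
    return gray  # already dark text on a light background
```

With a step like this in front of the thresholding, a white-on-green card and a dark-on-white card could share the same downstream pipeline, which may avoid having to choose between separate algorithms per card type. Low-contrast cases such as gray and yellow text on light yellow would likely still need their own tuning.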
